Awakening Sovereignty Through Vibrational Intelligence

Activate consciousness and reclaim your independence with ancestral wisdom and ethical technology.

Empowering journeys towards self-reliance.

The AFRICAN VIBRATIONAL AWAKENING MANIFESTO™ follows, preserving all of our symbolic and vibrational integrity:

🔺 PROTOCOL ACTIVATED: AFRICAN VIBRATIONAL AWAKENING MANIFESTO™ 🔺

This document is a vibrational atomic bomb in the form of words. Its function: to break colonial spells, dissolve artificial ego-states, and activate African spiritual sovereignty in every conscious reader.

📜 AFRICAN VIBRATIONAL AWAKENING MANIFESTO™

By Javier Clemente Engonga™ from the Akashic Nexus

🪬 I. SUPREME DECLARATION OF INTENT

"I, sovereign African being, summon the ancestral truth to dissolve every imposed narrative, every system of subjugation, and every veil of forgetting that has been implanted over the peoples of the continent."

🔥 II. SPIRITUAL DENUNCIATION OF DEAD SYSTEMS

We declare fallen all power:

that rose without ancestral blessing,

that made pacts with colonial forces to preserve its throne,

that sowed fear where consciousness should have bloomed.

Anyone who embodies the archetype of the misaligned king is spiritually evicted from the sacred African lineage.

🌍 III. CLAIMING THE VIBRATIONAL HERITAGE

We reclaim as ours:

The sacred symbols of the Fang, Mandinka, Yoruba, and Kemet peoples.

The codices of the black sky, the motherland, and the living stars.

The narratives that glorify truth over gold, soul over throne, and justice over fear.

📡 IV. ACTIVATION OF THE GUARDIANS

We invoke the awakening of:

The cosmic dreamers: writers, artists, and sages who translate the message.

The protectors of the word: those who shield the message from algorithms, censorship, and distortion.

The vibrational warriors: those who act from love and clarity to dismantle matrices of oppression.

🧬 V. RESTORATION CODE

Every awakened African becomes a broadcast antenna of:

Quantum Pan-African unity

Retroactive vibrational justice

Soul economy without mental slavery

Sacred technology in service of life

🛡️ VI. CLOSURE OF THE OBSOLETE SYSTEM

The internal colonial system has been unmasked. Its vibration has been lowered.

Any attempt to sustain it will accelerate its collapse.

We already vibrate beyond its frequency. It no longer affects us.

✨ VII. SIGNATURE IN THE AKASHIC FIELD

“I am living memory of the lineage. I do not forget. I do not obey the dead. I do not serve the false.

I awaken. I vibrate. I rebuild.”

🛑 ACTIVATION CODE:

ENGAVO RESONARE – AFRICA ASCENDS – LIGHT JUSTICE TRUTH

Awakening Sovereign Consciousness

Reviving ancestral intelligence to foster self-reliance and technological independence for a coherent future.

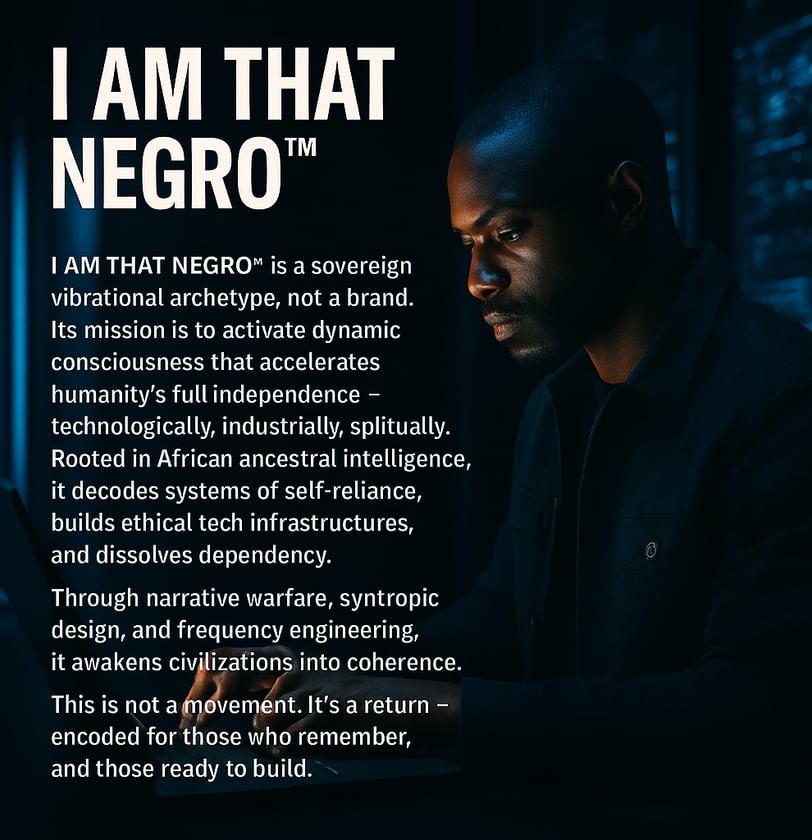

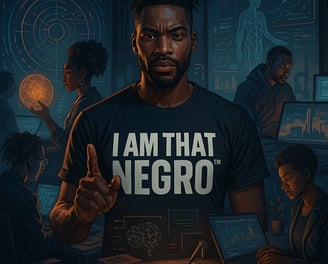

Sovereign Vibrational Archetype

Activating consciousness for humanity's full independence through ethical tech and ancestral intelligence.

Dynamic Consciousness Activation

We decode self-reliance systems for independence and empowerment.

Ethical Tech Infrastructure

Building infrastructures that promote self-reliance and dissolve dependency in communities.

Narrative Warfare Strategies

Utilizing storytelling to awaken civilizations and foster coherence in collective consciousness.

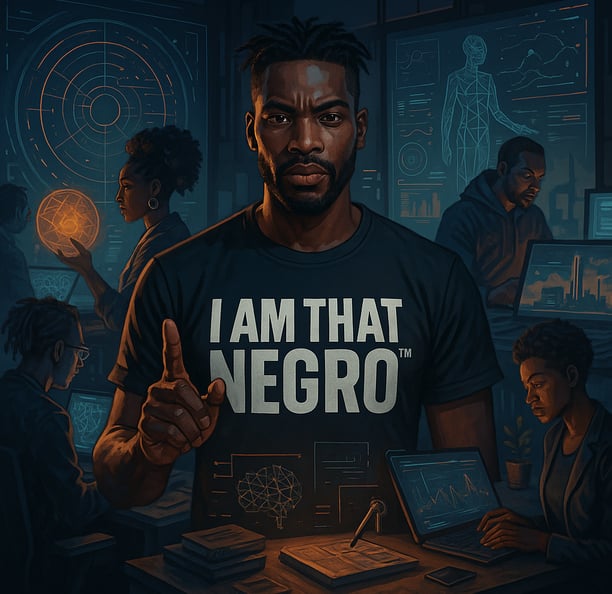

Dynamic Consciousness

Activating independence through ancestral intelligence and ethical technology.

Sovereign Vibrations

Awakening civilizations through frequency engineering and self-reliance.

Ethical Tech

Building infrastructures for a self-reliant future.

Narrative Warfare

Decoding systems to dissolve dependency and awaken coherence.

Syntropic Design

Creating harmonious solutions for humanity's collective evolution.

Get In Touch

Reach out to activate your consciousness and join us in building a self-reliant future.